A math score can be useful, but it is rarely the full story.

For students preparing for SAT Math, AP Calculus AB, or GAT Quantitative, a raw score shows the result of one attempt. It does not always explain why the result happened, whether the student can repeat it, or what should be done next.

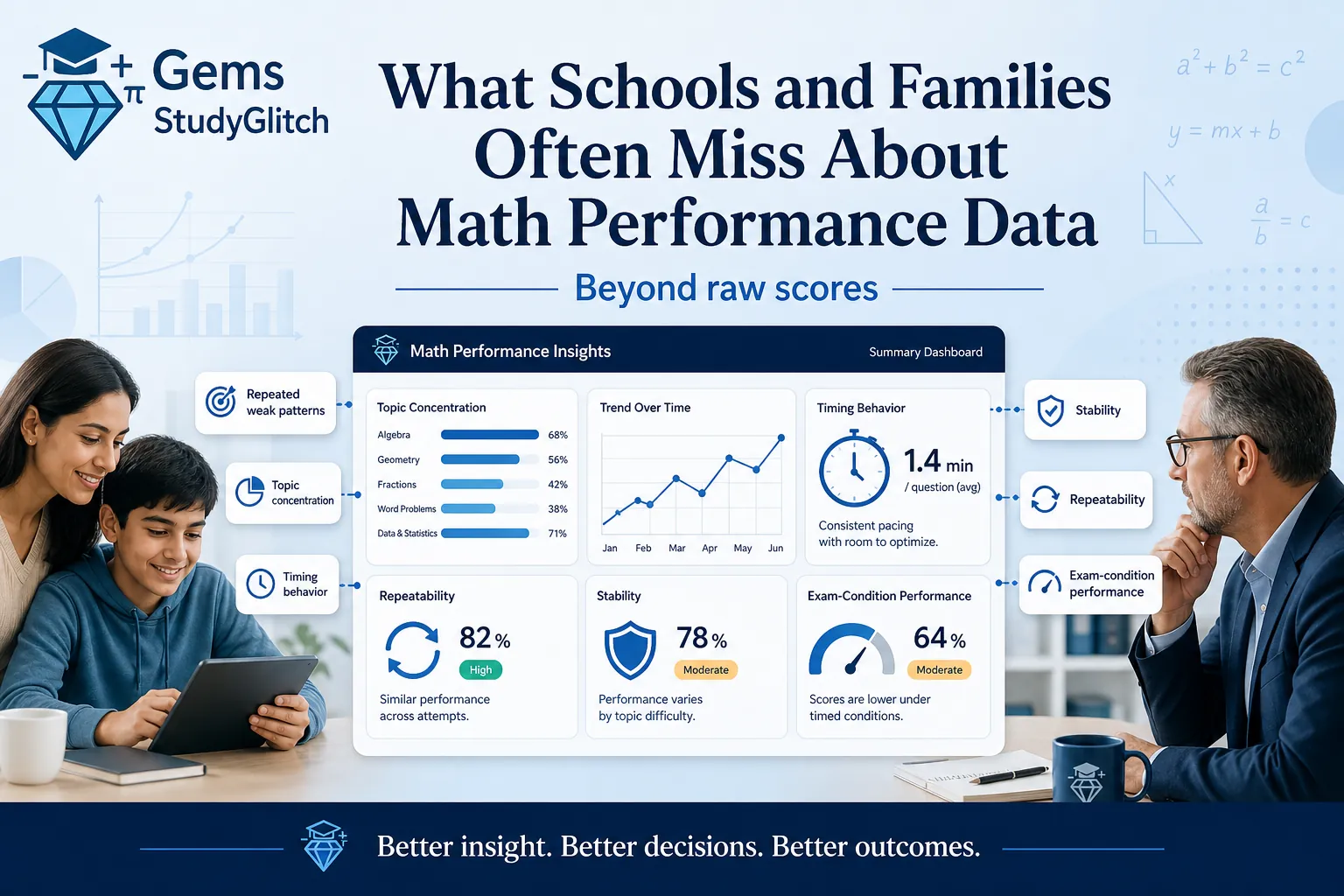

This is where schools and families often miss the deeper meaning of math performance data.

A student may score 70 percent, but that number alone does not show whether the weakness is algebra, timing, graph interpretation, careless mistakes, or exam pressure. Another student may score high once, but that does not always mean the performance is stable. A third student may look average overall while carrying one serious topic weakness that keeps limiting score improvement.

Good performance data should help schools, parents, and students understand the pattern behind the score.

Why raw scores are incomplete

A raw score gives a quick answer, but math performance needs more context.

If a student gets 32 out of 44 on a Digital SAT Math practice test, the score gives a result. It does not explain whether the student lost marks in algebra, advanced math, geometry, word problems, or data analysis. It also does not show whether the student ran out of time, guessed at the end, or made repeated mistakes in one topic.
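As a rough illustration, the same 32 out of 44 can be split by topic. The sketch below is not taken from any real report: the topic labels, the per-topic counts, and the `topic_breakdown` helper are all assumptions made up for this example.

```python
from collections import defaultdict

# Hypothetical per-question results for one 44-question practice test,
# stored as (topic, answered_correctly). All counts are invented.
results = (
    [("algebra", True)] * 12 + [("algebra", False)] * 2
    + [("advanced_math", True)] * 7 + [("advanced_math", False)] * 6
    + [("data_analysis", True)] * 7 + [("data_analysis", False)] * 1
    + [("geometry", True)] * 6 + [("geometry", False)] * 3
)


def topic_breakdown(results):
    """Return {topic: (correct, total)} from (topic, is_correct) pairs."""
    counts = defaultdict(lambda: [0, 0])
    for topic, is_correct in results:
        counts[topic][1] += 1
        if is_correct:
            counts[topic][0] += 1
    return {topic: (c, t) for topic, (c, t) in counts.items()}


breakdown = topic_breakdown(results)
total_correct = sum(c for c, _ in breakdown.values())
total_questions = sum(t for _, t in breakdown.values())

print(f"Raw score: {total_correct}/{total_questions}")
# List the weakest topic first so the biggest gap is visible at a glance.
for topic, (correct, total) in sorted(
    breakdown.items(), key=lambda kv: kv[1][0] / kv[1][1]
):
    print(f"{topic:14s} {correct}/{total} ({correct / total:.0%})")
```

In this made-up case, half of the 12 lost marks come from advanced math, which the raw score alone would not reveal.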

The same applies to AP Calculus AB and GAT Quantitative.

An AP Calculus AB student may lose marks because they do not understand integration, but they may also lose marks because they cannot transfer the same idea into a graph, table, or AP Calculus FRQ context. A GAT Quantitative student may understand the math but lose time because they cannot recognize question patterns quickly enough.

The score is the outcome. The performance data should explain the cause.

Repeated weak patterns

One weak result can happen for many reasons.

A student may be tired, distracted, unfamiliar with the test format, or surprised by a difficult question. But when the same weakness appears repeatedly, it becomes more important.

Repeated weak patterns are one of the strongest signals in math performance data.

If a student repeatedly misses linear equations in SAT Math, applications of derivatives in AP Calculus AB, or ratio questions in GAT Quantitative, the issue is not random. It is a pattern that should guide the study plan.

Schools and families should look for:

- Topics that keep appearing as weak

- Skills that remain unstable after review

- Question types that repeatedly cause errors

- Mistakes that return under time pressure

- Weaknesses that affect multiple tests

A good report should not treat every mistake equally. Repeated mistakes deserve more attention because they are more likely to affect future performance.
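One simple way to separate a repeated pattern from a one-off result is to count how often each topic falls below a cutoff across attempts. The sketch below assumes a 60 percent cutoff and a rule that two weak attempts make a pattern. Both values, the sample data, and the `repeated_weak_topics` helper are illustrative assumptions, not a standard.

```python
from collections import Counter

# Hypothetical topic accuracy (fraction correct) on three practice attempts.
attempts = [
    {"linear_equations": 0.50, "ratios": 0.80, "geometry": 0.55},
    {"linear_equations": 0.55, "ratios": 0.75, "geometry": 0.80},
    {"linear_equations": 0.45, "ratios": 0.85, "geometry": 0.70},
]

WEAK_BELOW = 0.60  # assumed cutoff for calling a topic weak on one attempt
MIN_REPEATS = 2    # assumed number of weak attempts that counts as a pattern


def repeated_weak_topics(attempts, weak_below=WEAK_BELOW, min_repeats=MIN_REPEATS):
    """Return {topic: weak_attempt_count} for topics that are weak repeatedly."""
    weak_counts = Counter(
        topic
        for attempt in attempts
        for topic, accuracy in attempt.items()
        if accuracy < weak_below
    )
    return {t: n for t, n in weak_counts.items() if n >= min_repeats}


patterns = repeated_weak_topics(attempts)
print(patterns)
```

Here geometry dips once and recovers, so it is not flagged, while linear equations are weak on all three attempts and become the priority.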

Topic concentration

Not every weak score comes from broad weakness.

Sometimes a student’s score is being held back by a small number of topics. This is called topic concentration. The student may be strong in many areas but consistently lose marks in one or two important skills.

This matters because the solution is different.

If weakness is broad, the student may need foundation repair across several areas. If weakness is concentrated, the student may need targeted practice in a smaller set of topics.

For SAT Math, a student may be strong overall but lose repeated marks in advanced math or worded algebra. For AP Calculus AB, a student may understand derivative rules but struggle with accumulation, integrals, or graph interpretation. For GAT Quantitative, a student may be comfortable with arithmetic but lose marks in geometry or percentages.

Topic concentration helps answer a practical question:

What should the student work on first?

Without that information, students may waste time reviewing everything equally.
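Topic concentration can be approximated as the share of lost marks that comes from the one or two worst topics. The sketch below compares two hypothetical students who each lost 15 marks. The numbers, the topic names, and the `concentration` helper are assumptions for illustration.

```python
def concentration(lost_by_topic, top_n=2):
    """Return (share of lost marks in the top_n worst topics, those topics)."""
    total_lost = sum(lost_by_topic.values())
    if total_lost == 0:
        return 0.0, []
    ranked = sorted(lost_by_topic.items(), key=lambda kv: kv[1], reverse=True)
    top = ranked[:top_n]
    share = sum(lost for _, lost in top) / total_lost
    return share, [topic for topic, _ in top]


# Hypothetical marks lost per topic for two students with the same total.
concentrated = {"advanced_math": 8, "worded_algebra": 5, "geometry": 1, "data_analysis": 1}
broad = {"advanced_math": 4, "worded_algebra": 4, "geometry": 4, "data_analysis": 3}

share_c, topics_c = concentration(concentrated)
share_b, topics_b = concentration(broad)

print(f"Concentrated: {share_c:.0%} of lost marks in {topics_c}")
print(f"Broad:        {share_b:.0%} of lost marks in {topics_b}")
```

A high share suggests targeted practice on the named topics. A share close to what an even spread would give suggests broader foundation work.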

Timing behavior

Timing is one of the most misunderstood parts of math performance.

A student’s score may look like a knowledge problem, but the real issue may be pacing. Another student may finish quickly but lose marks because they rush. These two students need different kinds of support.

Math performance data should show timing behavior, not just accuracy.

For Digital SAT Math, timing can reveal whether a student spends too long on multi-step algebra, graph questions, or word problems. For AP Calculus AB, it can show whether the student slows down on application questions or AP Calculus FRQ-style reasoning. For GAT Quantitative, it can show whether the student is moving fast enough across short quantitative questions.

Timing behavior can show:

- Whether the student finishes the test

- Which question types take too long

- Whether late-test accuracy drops

- Whether rushed answers create careless mistakes

- Whether timing improves across attempts

This helps schools and parents understand whether the student needs content review, timing practice, or exam strategy.
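Two of the signals above, slow question types and a late-test accuracy drop, can be read from a simple per-question log. The sketch below assumes a log of question type, seconds spent, and correctness in test order. The log format, the sample values, and both helper functions are hypothetical.

```python
from statistics import mean

# Hypothetical log in test order: (question_type, seconds_spent, answered_correctly).
log = [
    ("algebra", 60, True), ("algebra", 70, True), ("graph", 95, True),
    ("word_problem", 150, True), ("algebra", 65, True),
    ("word_problem", 170, False), ("graph", 90, True),
    ("word_problem", 40, False), ("algebra", 30, False), ("graph", 35, False),
]


def mean_seconds_by_type(log):
    """Average time spent per question type."""
    by_type = {}
    for qtype, seconds, _ in log:
        by_type.setdefault(qtype, []).append(seconds)
    return {qtype: mean(times) for qtype, times in by_type.items()}


def half_accuracy(log):
    """Accuracy on the first half and the second half of the test."""
    def accuracy(part):
        return sum(ok for _, _, ok in part) / len(part)

    mid = len(log) // 2
    return accuracy(log[:mid]), accuracy(log[mid:])


pace = mean_seconds_by_type(log)
slowest = max(pace, key=pace.get)
first_acc, second_acc = half_accuracy(log)

print(f"Slowest question type: {slowest} ({pace[slowest]:.0f}s on average)")
print(f"Accuracy: first half {first_acc:.0%}, second half {second_acc:.0%}")
```

In this invented log, long word problems early in the test are followed by short, rushed answers and a sharp accuracy drop at the end, which points to pacing rather than content.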

Stability

A single score cannot show stability.

A student may score well once and then drop on the next attempt. Another student may slowly improve but still fluctuate. A third student may have stable performance even if the current score is not yet high.

Stability matters because exam performance should become reliable.

For SAT Math, AP Calculus AB, and GAT Quantitative, students need to perform under pressure, not only during relaxed practice. A stable student can repeat their performance across different tests and conditions. An unstable student may understand some topics but still struggle when the format, timing, or difficulty changes.

Schools and families should look at:

- Score movement across attempts

- Topic accuracy across attempts

- Timing consistency

- Repeated strong areas

- Repeated weak areas

- Whether improvement is stable or temporary

A student who improves from 55 percent to 70 percent once may be moving forward. But if the next two attempts return to 56 percent, the improvement is not yet stable.
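The example above can be checked with a few lines of arithmetic. The sketch below uses the scores 55, 70, 56, and 56 from that example. The `stability_summary` helper and the choice to compare the two most recent attempts against the first one are assumptions, not an established metric.

```python
from statistics import mean, pstdev

# Scores from the example: a jump to 70, then two attempts back at 56.
scores = [55, 70, 56, 56]


def stability_summary(scores, recent=2):
    """Compare the best one-time gain with the gain that actually held."""
    baseline = scores[0]
    recent_mean = mean(scores[-recent:])
    return {
        "best_gain": max(scores) - baseline,   # largest one-time improvement
        "held_gain": recent_mean - baseline,   # improvement still visible recently
        "spread": round(pstdev(scores), 1),    # how much the scores fluctuate
    }


summary = stability_summary(scores)
print(summary)
```

The best gain is 15 points, but the gain that held across the last two attempts is only 1 point, which is why the improvement cannot yet be called stable.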

Repeatability

Repeatability is different from one-time success.

A student may get a strong score because the test matched familiar topics, because they guessed well, or because the question style was comfortable. That does not always mean the student is ready.

Repeatability asks a deeper question:

Can the student perform again?

This is especially important before major exams. Students should not rely on one high practice score. They should be able to repeat strong performance across multiple attempts, different question sets, and timed conditions.

For AP Calculus AB, repeatability is especially important because students may do well on direct derivative or integral questions but struggle when the same concept appears in a graph, table, worded context, or FRQ. For Digital SAT Math, repeatability matters because question wording and difficulty can shift. For GAT Quantitative, repeatability matters because speed and pattern recognition need to hold across many question types.

Good performance data should show whether a student’s strength is consistent or fragile.

Exam-condition performance

Practice at home and performance under exam conditions are not the same.

A student may understand a topic during review but lose marks when questions are mixed, timed, and unfamiliar. This does not mean the student did not study. It means exam-condition performance needs to be measured separately.

Exam-condition performance includes:

- Timed practice

- Mixed topics

- Realistic question difficulty

- Pressure across a full test

- Accuracy after fatigue

- Ability to recover after difficult questions

This matters because exams do not test topics in isolation. They combine skills, change wording, and require decisions under time pressure.

The StudyGlitch PowerCenter supports this layer by helping students test performance after topic review. The goal is not just to practice more questions. The goal is to see whether learning holds under test-like conditions.

Why schools need deeper data

Schools often need more than individual scores.

If many students are weak in the same topic, that is not only a student issue. It may indicate that the topic needs reteaching, more practice, or a different explanation. If many students lose accuracy near the end of timed tests, timing strategy may need attention. If students perform well in lessons but poorly in mixed practice, transfer may be the problem.

For schools and learning programs, StudyGlitch can also work as an EdTech SaaS layer that supports diagnostic-based learning, practice tracking, and performance reporting inside an existing academic path.

Deeper performance data can help schools understand:

- Which topics are weak across a group

- Which students may be at academic risk

- Which skills need reteaching

- Whether timing is affecting performance

- Whether improvement is happening across attempts

- Whether practice is producing stable results

This kind of information helps schools make better academic decisions without relying only on general impressions.
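At group level, the same idea becomes a question of how many students are weak in a topic. The sketch below flags a topic for possible reteaching when at least half of the class scores below 60 percent on it. The thresholds, the class data, and the `group_weak_topics` helper are illustrative assumptions.

```python
# Hypothetical class data: student -> {topic: accuracy as a fraction}.
class_results = {
    "student_a": {"integration": 0.40, "derivatives": 0.85},
    "student_b": {"integration": 0.55, "derivatives": 0.50},
    "student_c": {"integration": 0.45, "derivatives": 0.90},
    "student_d": {"integration": 0.80, "derivatives": 0.75},
}


def group_weak_topics(class_results, weak_below=0.60, min_share=0.5):
    """Return {topic: share of students below weak_below} for flagged topics."""
    topics = {topic for record in class_results.values() for topic in record}
    flagged = {}
    for topic in sorted(topics):
        scores = [r[topic] for r in class_results.values() if topic in r]
        share = sum(score < weak_below for score in scores) / len(scores)
        if share >= min_share:
            flagged[topic] = share
    return flagged


flagged = group_weak_topics(class_results)
print(flagged)
```

In this invented class, three of four students are weak in integration, which suggests a teaching issue, while the single weak derivatives score looks like an individual gap.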

Why families need deeper data

Families often want to know whether the student is improving.

But improvement is not always obvious from one score. A student may have the same overall score while improving in one topic and declining in another. Another student may improve accuracy but still struggle with timing. A third student may finish more questions but make more rushed mistakes.

Parents need data that explains the situation clearly.

Good performance data helps families understand:

- Whether the student is improving

- Which topics are blocking score improvement

- Whether the student needs more practice or better review

- Whether timing is a serious issue

- Whether tutoring is needed

- What the student should do next

This is why the StudyGlitch Diagnostic Test can be useful at the beginning of preparation. It helps families see the starting point before choosing a study plan.

Why students need deeper data

Students often judge themselves by the final score.

That can be discouraging if the score does not improve quickly. It can also be misleading if the score improves once but the weakness is still present.

Deeper performance data helps students study with more direction.

Instead of thinking “I am bad at math,” the student can see a more specific message:

- I need to repair algebra

- I need to slow down on rushed questions

- I need to practice AP Calculus graph interpretation

- I need to improve GAT timing

- I need to repeat strong performance across more tests

This makes improvement feel more manageable.

The student is no longer fighting the whole subject. They are working on specific, visible parts of performance.

What meaningful math performance data should include

A useful performance report should not be overloaded, but it should be complete enough to guide action.

The best reports usually include:

- Overall score

- Topic-level performance

- Repeated weak patterns

- Timing profile

- Performance across attempts

- Stability and repeatability

- Exam-condition performance

- Recommended next steps

This combination gives a clearer view than a score alone.

It helps parents, students, tutors, and schools understand not only what happened, but what should happen next.

How StudyGlitch thinks about performance data

StudyGlitch is built around the idea that math preparation should be structured and measurable.

The goal is not only to give students more questions. The goal is to help them understand where they stand, repair weak areas, test performance, and track whether the result is changing.

That is why StudyGlitch connects diagnostic-based learning, guided study, PowerCenter practice, reporting, and tutoring support into one preparation path.

You can learn more about the platform on the About StudyGlitch page.

Or reach out for support through the Contact StudyGlitch page.

A better question than “What was the score?”

The most useful question is not only, “What was the score?”

A better question is:

What does this score reveal?

That question leads to better decisions. It helps schools identify learning gaps. It helps parents understand the real situation. It helps students focus on the next step instead of repeating random practice.

For SAT Math, AP Calculus AB, and GAT Quantitative preparation, the score matters. But the pattern behind the score matters more.

Related reading:

- How Parents in Saudi Arabia Can Track Real Academic Progress

- Why Some Students Score Well Once but Cannot Repeat It in Math Exams

Frequently Asked Questions

Why are raw scores not enough in math performance data?

Raw scores show the result of one attempt, but they do not explain repeated weak patterns, topic concentration, timing behavior, stability, repeatability, or exam-condition performance.

What should schools look for in math performance data?

Schools should look for group-level weak topics, repeated mistake patterns, timing issues, students at risk, improvement across attempts, and whether performance is stable under test conditions.

What should parents look for in a math progress report?

Parents should look for topic weaknesses, repeated mistakes, timing behavior, score movement over time, and clear next steps for SAT Math, AP Calculus AB, or GAT Quantitative preparation.

Why does repeatability matter in math exams?

Repeatability matters because one strong score does not always mean the student is ready. Students need to perform consistently across different tests, question types, and timed conditions.

How can diagnostic-based learning help students improve?

Diagnostic-based learning helps students identify weak topics before studying, connect practice to the right skills, and measure whether their performance improves over time.